Description

MCP — Message Control Platform

Overview

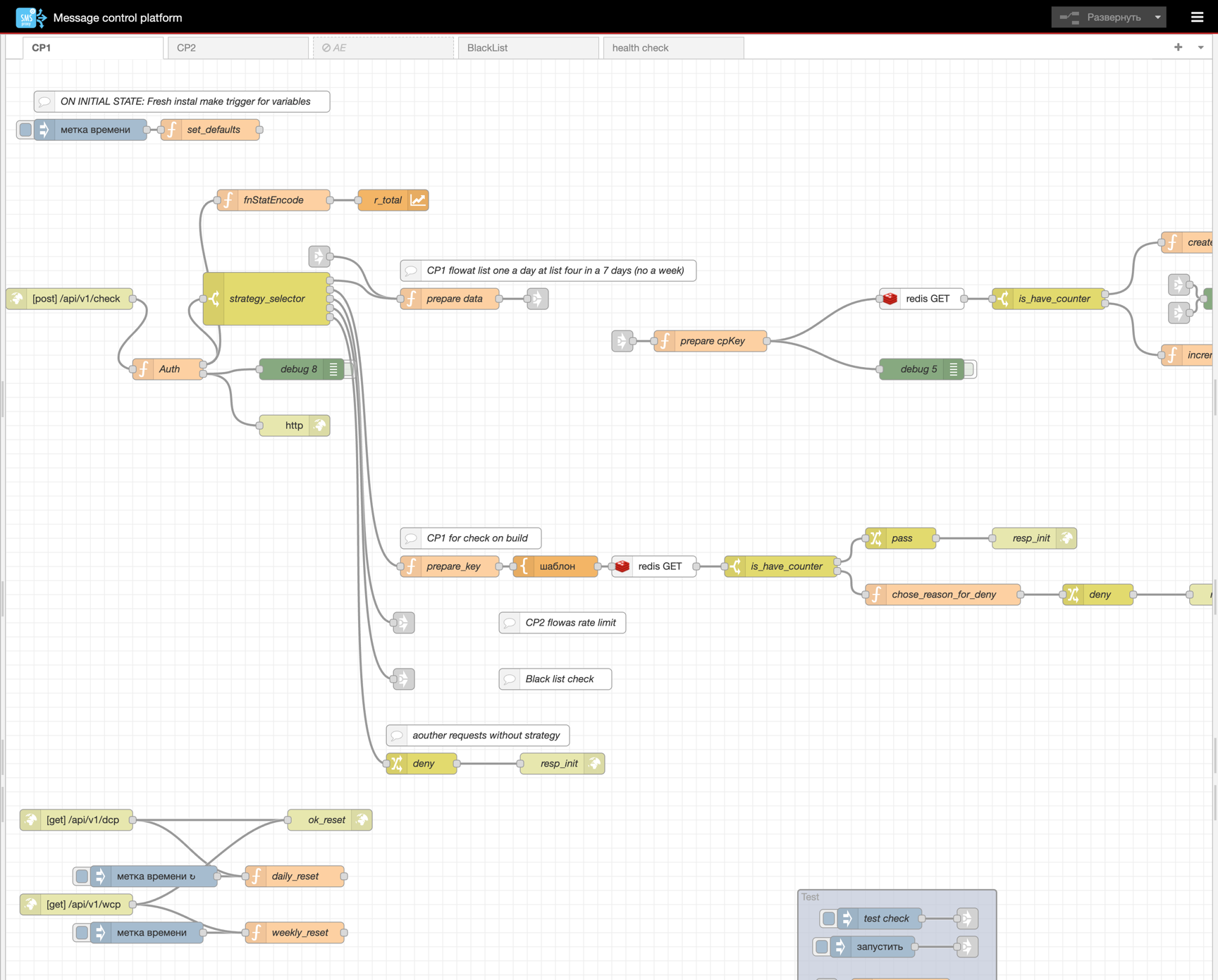

MCP (Message Control Platform) is a flexible, visual message filtering and contact policy enforcement engine built on top of Node-RED.

It acts as the control plane of the messaging infrastructure — every outbound message is evaluated against a configurable set of policy workflows before it is allowed to reach the transmission layer (SMPP Proxy).

MCP provides a no-code/low-code approach to building sophisticated contact policy rules. Using the Node-RED visual flow editor, operators can design, modify, and deploy filtering strategies in real time — without redeploying backend services or writing application code.

Key value: MCP gives your messaging operations a powerful, real-time policy engine with a visual interface — enabling rapid iteration on business rules, compliance requirements, and contact frequency controls.

Architecture at a Glance

| Component | Role |
|---|---|
| Node-RED | Visual flow engine; all policy logic is defined as workflows (flows) |
| Redis | High-performance state store for counters, blacklists, and deduplication keys |
| S3 / MinIO | Object storage for blacklist source files (CSV) |
| Prometheus | Metrics exporter for monitoring request throughput per strategy |

MCP is fully containerized and horizontally scalable. Multiple Node-RED instances share the same flow definitions and connect to a shared Redis and S3 backend.

How It Works

Every incoming check request hits a single HTTP API endpoint. The request is authenticated, then routed to the appropriate strategy workflow based on the strategy field in the request body. Each strategy implements its own filtering logic.
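The authenticate-then-route step can be sketched roughly as follows. This is a minimal Python illustration, not the Node-RED flow itself: the `STRATEGIES` registry, the `allow_all` placeholder, the `handle_check` function, and the way an authentication failure is reported are all hypothetical. Only the `strategy` field, the `x-api-auth` header, and the `state`/`pass` response shape come from this document.

```python
import hmac
from typing import Callable, Dict

# A strategy takes the parsed request body and returns {"state": ..., "pass": ...}.
Strategy = Callable[[dict], dict]


def allow_all(body: dict) -> dict:
    """Placeholder strategy used only for this illustration."""
    return {"state": "ok", "pass": True}


# Hypothetical registry; in MCP the strategies are Node-RED flows.
STRATEGIES: Dict[str, Strategy] = {
    "cp1": allow_all,
    "onbuild_cp1": allow_all,
    "cp2": allow_all,
    "blacklist": allow_all,
}


def handle_check(headers: dict, body: dict, expected_key: str) -> dict:
    """Authenticate the request, then route it by its `strategy` field."""
    supplied = headers.get("x-api-auth", "")
    # Constant-time comparison avoids leaking key contents through timing.
    if not hmac.compare_digest(supplied.encode(), expected_key.encode()):
        # Assumption: this document does not say how auth failures are reported.
        raise PermissionError("invalid or missing x-api-auth header")
    name = body.get("strategy")
    strategy = STRATEGIES.get(name) if isinstance(name, str) else None
    if strategy is None:
        # Assumption: an unknown strategy is treated as a denial.
        return {"state": "unknown_strategy", "pass": False}
    return strategy(body)
```

Keeping the strategies in a lookup table mirrors the idea that each strategy is an independent workflow selected by name.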

Request Lifecycle

The response is a JSON object with two fields:

- `state`: the reason code (e.g. `ok`, `daily_cp`, `weekly_cp`, `blacklist`, `duplicate`)
- `pass`: boolean indicating whether the message is allowed to proceed

Available Strategies (Workflows)

1. CP1 — Frequency-Based Contact Policy

Purpose: Limits the number of messages a single recipient (daddr) can receive within daily and weekly windows.

How it works:

- A Redis key `daddr:<phone_number>` stores a JSON counter object with fields `d` (daily count) and `w` (weekly count).
- On the first message to a new recipient, a counter is created with `d=1, w=1` and a configurable TTL.
- On subsequent messages, counters are incremented and checked against limits:
  - If the daily limit is exceeded → the message is denied with state `daily_cp`
  - If the weekly limit is exceeded → the message is denied with state `weekly_cp`
- If both limits are within bounds, the counter is updated (with preserved TTL) and the message is allowed.
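The counter logic above can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the actual flow: `FakeRedis` is a hypothetical in-memory stand-in for Redis, the limit and TTL parameters are invented names, and "exceeded" is interpreted as the incremented count being strictly greater than the limit.

```python
import json
from typing import Dict, Optional


class FakeRedis:
    """Tiny in-memory stand-in for Redis string keys (TTL is recorded, not enforced)."""

    def __init__(self) -> None:
        self.data: Dict[str, str] = {}
        self.ttl: Dict[str, int] = {}

    def get(self, key: str) -> Optional[str]:
        return self.data.get(key)

    def set(self, key: str, value: str, ttl: Optional[int] = None) -> None:
        self.data[key] = value
        if ttl is not None:  # ttl=None keeps whatever TTL the key already has
            self.ttl[key] = ttl


def cp1_check(store: FakeRedis, daddr: str, daily_limit: int,
              weekly_limit: int, ttl_seconds: int) -> dict:
    """Apply the CP1 frequency policy to one outbound message."""
    key = f"daddr:{daddr}"
    raw = store.get(key)
    if raw is None:
        # First message to this recipient: create the counter with a TTL.
        store.set(key, json.dumps({"d": 1, "w": 1}), ttl=ttl_seconds)
        return {"state": "ok", "pass": True}
    counter = json.loads(raw)
    d, w = counter["d"] + 1, counter["w"] + 1
    if d > daily_limit:  # assumption: strict comparison
        return {"state": "daily_cp", "pass": False}
    if w > weekly_limit:
        return {"state": "weekly_cp", "pass": False}
    # Within bounds: persist the incremented counter, leaving the TTL untouched.
    store.set(key, json.dumps({"d": d, "w": w}))
    return {"state": "ok", "pass": True}
```

Against a real Redis server, a plain `SET` discards an existing TTL unless the `KEEPTTL` option is given (available since Redis 6.0), so preserving the TTL has to be done explicitly.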

Counter Reset:

- Daily counters are reset to zero every day at midnight via a scheduled cron job.

- Weekly counters are reset to zero every Monday at midnight.

- Resets are performed atomically using Lua scripts executed directly in Redis, processing keys in batches for efficiency.

- Manual reset is also available via HTTP endpoints (`/api/v1/dcp` for daily, `/api/v1/wcp` for weekly).
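To illustrate the batched reset, here is a rough Python sketch of the same logic applied to a plain dictionary. MCP performs this step with Lua scripts inside Redis; the function names, the `batch_size` parameter, and the in-memory `data` mapping below are hypothetical and only show the shape of the operation.

```python
import json
from itertools import islice
from typing import Dict, Iterator, List


def batched(keys: List[str], size: int) -> Iterator[List[str]]:
    """Yield successive chunks of at most `size` keys."""
    it = iter(keys)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def reset_counters(data: Dict[str, str], field: str, batch_size: int = 1000) -> int:
    """Set `field` ("d" or "w") to 0 on every daddr:* counter; return keys touched."""
    touched = 0
    keys = [k for k in data if k.startswith("daddr:")]
    for chunk in batched(keys, batch_size):
        for key in chunk:
            counter = json.loads(data[key])
            counter[field] = 0  # the other field is left unchanged
            data[key] = json.dumps(counter)
            touched += 1
    return touched
```

Working in batches keeps each unit of work bounded, which matters when the key space is large.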

2. CP1 On-Build — Pre-flight Contact Policy Check

Purpose: A read-only variant of the CP1 strategy used for pre-validation during message preparation.

How it works:

- Looks up the same `daddr:<phone_number>` key in Redis.
- If no counter exists → the message is allowed (`pass: true`).
- If a counter exists → checks whether daily or weekly limits have already been reached, and returns the appropriate denial reason.

- Does not modify any counters — purely a read operation.
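A read-only check of this kind might look like the sketch below. It is a hedged Python illustration: the `data` mapping stands in for Redis, the limit parameters are hypothetical, and "already reached" is interpreted as the stored count being greater than or equal to the limit.

```python
import json
from typing import Mapping


def onbuild_cp1_check(data: Mapping[str, str], daddr: str,
                      daily_limit: int, weekly_limit: int) -> dict:
    """Report whether a message would be blocked, without touching counters."""
    raw = data.get(f"daddr:{daddr}")
    if raw is None:
        # No counter yet: nothing has been sent to this recipient.
        return {"state": "ok", "pass": True}
    counter = json.loads(raw)
    if counter["d"] >= daily_limit:  # assumption: "reached" means >=
        return {"state": "daily_cp", "pass": False}
    if counter["w"] >= weekly_limit:
        return {"state": "weekly_cp", "pass": False}
    return {"state": "ok", "pass": True}
```

Because the function only reads, it can be called repeatedly during message preparation without consuming any of the recipient's quota.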

This strategy enables upstream services to check whether a message would be blocked before actually submitting it.

3. CP2 — Deduplication Policy

Purpose: Prevents duplicate messages to the same recipient within a configurable time window.

How it works:

- A Redis key `dedup:<phone_number>` is checked.
- If the key does not exist → the message is new:
  - A deduplication marker is created with a TTL (time-to-live window).
  - The message is allowed (`pass: true`).
- If the key exists → the message is a duplicate:
  - The message is denied with state `duplicate`.
  - An HTTP 429 (Too Many Requests) response is returned.

This effectively creates a cooldown window per recipient — no two messages to the same number are allowed within the configured interval.
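The cooldown behaviour can be sketched as follows. This is an illustrative Python model rather than the actual flow: `DedupStore` is a hypothetical in-memory stand-in for Redis keys with expiry, the clock is injectable so the window can be exercised without waiting, and the HTTP 200 status for allowed messages is an assumption (only the 429 for duplicates is stated in this document).

```python
import time
from typing import Callable, Dict, Tuple


class DedupStore:
    """In-memory stand-in for Redis keys with expiry."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._expires_at: Dict[str, float] = {}
        self._clock = clock

    def set_if_absent(self, key: str, ttl_seconds: float) -> bool:
        """Create the key unless a live one exists; True means it was created."""
        now = self._clock()
        expiry = self._expires_at.get(key)
        if expiry is not None and expiry > now:
            return False
        self._expires_at[key] = now + ttl_seconds
        return True


def cp2_check(store: DedupStore, daddr: str, window_seconds: float) -> Tuple[int, dict]:
    """Return (http_status, response_body) for one message."""
    if store.set_if_absent(f"dedup:{daddr}", window_seconds):
        return 200, {"state": "ok", "pass": True}  # 200 is an assumption
    return 429, {"state": "duplicate", "pass": False}
```

On a real Redis server, `SET key value NX EX seconds` performs the check and the creation in a single atomic command; whether the MCP flow uses that form is not stated here.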

4. Blacklist — Contact Blacklist Check

Purpose: Blocks messages to phone numbers that appear on a centrally managed blacklist.

How it works:

- A Redis key `blacklist:<phone_number>` is checked.
- If the key does not exist → the number is not blacklisted, and the message is allowed.
- If the key exists → the number is blacklisted, and the message is denied with state `blacklist`.

Blacklist Management:

The blacklist is maintained as CSV files in S3-compatible object storage (MinIO). Its lifecycle is fully automated:

- Load: CSV files are listed and read from the S3 bucket `contact-policy/black-list/`.
- Transform: CSV rows are split, parsed, and formatted into Redis SET commands with a transaction key and TTL.
- Store: All entries are written to Redis in a single atomic MULTI transaction.
- Clean: After loading the new blacklist, old entries (from previous loads) are identified and deleted based on the transaction key, ensuring the blacklist is always current.
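The load, transform, store, and clean steps can be sketched in Python as shown below. This is a simplified model under several assumptions: the CSV files are passed in as strings instead of being read from S3, the phone number is assumed to be in the first column with no header row, a plain dictionary stands in for Redis, TTL handling is omitted, and the function names are hypothetical.

```python
import csv
import io
import uuid
from typing import Dict, Iterable


def load_blacklist(store: Dict[str, str], csv_texts: Iterable[str]) -> str:
    """Replace the blacklist in `store` with the numbers found in `csv_texts`."""
    tx = uuid.uuid4().hex  # transaction key identifying this load
    # Transform: one blacklist:<number> entry per non-empty first column.
    fresh: Dict[str, str] = {}
    for text in csv_texts:
        for row in csv.reader(io.StringIO(text)):
            if row and row[0].strip():
                fresh[f"blacklist:{row[0].strip()}"] = tx
    # Store: apply all entries together (a MULTI transaction in the real system).
    store.update(fresh)
    # Clean: drop blacklist entries that were written by an earlier load.
    stale = [k for k, v in store.items() if k.startswith("blacklist:") and v != tx]
    for key in stale:
        del store[key]
    return tx


def is_blacklisted(store: Dict[str, str], daddr: str) -> bool:
    """The blacklist check itself is a simple key-existence test."""
    return f"blacklist:{daddr}" in store
```

Writing the new entries before deleting the stale ones means a number that remains on the list is never briefly missing during a refresh.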

Auto-Renewal: A configurable cron schedule triggers automatic blacklist refresh from S3, ensuring the blacklist stays synchronized with the source of truth.

5. Health Check

Purpose: A simple liveness probe endpoint.

- Endpoint: `GET /api/v1/health`
- Response: `200 OK`

Used for container orchestration health monitoring (Docker, Kubernetes).

API Reference

| Method | Endpoint | Description |
|---|---|---|
| POST | `/api/v1/check` | Main policy check endpoint; evaluates the message against the specified strategy |
| GET | `/api/v1/health` | Health check / liveness probe |
| GET | `/api/v1/dcp` | Trigger manual daily counter reset |
| GET | `/api/v1/wcp` | Trigger manual weekly counter reset |

Policy Check Request

POST /api/v1/check

Header: x-api-auth: <api_key>

Request Body:

    {
      "task_id": "49",
      "transaction_id": "1C439986-E1E9-4697-A127-4079EF1180D9",
      "app_id": 1,
      "esme": 100,
      "saddr": "SENDER_NAME",
      "daddr": "998901234567",
      "message": "Hello",
      "strategy": "cp1"
    }

Supported strategies: cp1, onbuild_cp1, cp2, blacklist
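As an illustration of calling the endpoint, the following Python sketch builds the request with the standard library only. The `build_check_request` helper, the base URL used in the usage note, and the `Content-Type` header are assumptions made for the example; the field values are copied from the sample request body above.

```python
import json
import urllib.request


def build_check_request(base_url: str, api_key: str, daddr: str,
                        message: str, strategy: str) -> urllib.request.Request:
    """Build (but do not send) a POST /api/v1/check request."""
    body = {
        "task_id": "49",
        "transaction_id": "1C439986-E1E9-4697-A127-4079EF1180D9",
        "app_id": 1,
        "esme": 100,
        "saddr": "SENDER_NAME",
        "daddr": daddr,
        "message": message,
        "strategy": strategy,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/api/v1/check",
        data=json.dumps(body).encode("utf-8"),
        # Content-Type is an assumption; x-api-auth is the documented header.
        headers={"x-api-auth": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

The request could then be sent with `urllib.request.urlopen(req)`, for example after `req = build_check_request("http://mcp.example:1880", "secret", "998901234567", "Hello", "cp1")` (a hypothetical host). Note that `urlopen` raises `urllib.error.HTTPError` for non-2xx responses, so a 429 returned for a duplicate has to be caught and its body read from the exception.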

Response (Allowed)

    {
      "state": "ok",
      "pass": true
    }

Response (Denied)

    {
      "state": "daily_cp",
      "pass": false
    }

Possible state values: ok, daily_cp, weekly_cp, duplicate, blacklist, unknown_strategy
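A client might decode the response along these lines. This is a small Python sketch, not part of MCP: the `CheckResult` type and `parse_check_response` function are hypothetical, and rejecting unrecognised states is a design choice made for the example.

```python
import json
from dataclasses import dataclass

# State values listed in this document.
KNOWN_STATES = {"ok", "daily_cp", "weekly_cp", "duplicate", "blacklist", "unknown_strategy"}


@dataclass(frozen=True)
class CheckResult:
    state: str
    passed: bool  # named `passed` because `pass` is a reserved word in Python


def parse_check_response(raw: str) -> CheckResult:
    """Decode a policy check response and validate its state value."""
    payload = json.loads(raw)
    state = payload["state"]
    if state not in KNOWN_STATES:
        raise ValueError(f"unexpected state: {state!r}")
    return CheckResult(state=state, passed=bool(payload["pass"]))
```

Failing loudly on an unknown state makes it obvious when a new strategy adds a reason code the client does not yet handle.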

Observability

MCP exports Prometheus-compatible metrics for monitoring:

| Metric | Type | Description |
|---|---|---|
| `requests_total` | Counter | Total number of policy check requests |
| `req_totl` | Counter | Requests broken down by strategy label |

Metrics are accessible via the standard Node-RED Prometheus exporter endpoint.

Scalability

MCP is designed for horizontal scaling:

- Multiple Node-RED instances (contact-policy-0 through contact-policy-4) run the same flow definitions from a shared volume.

- All instances connect to a shared Redis backend for state consistency.

- An external load balancer distributes traffic across instances.

- Stateless request processing ensures any instance can handle any request.

Technology Stack

| Technology | Version | Purpose |
|---|---|---|
| Node-RED | 4.1.1 (Debian) | Visual flow engine |
| Redis | latest | State storage (counters, blacklists, dedup keys) |
| MinIO (S3-compatible) | not specified | Blacklist file storage |
| Prometheus Exporter | 1.0.5 | Metrics export |
| AWS S3 SDK | 3.x | S3 bucket integration |
| Aerospike | 6.4.0 | Alternative storage backend (experimental, disabled) |