System Architecture

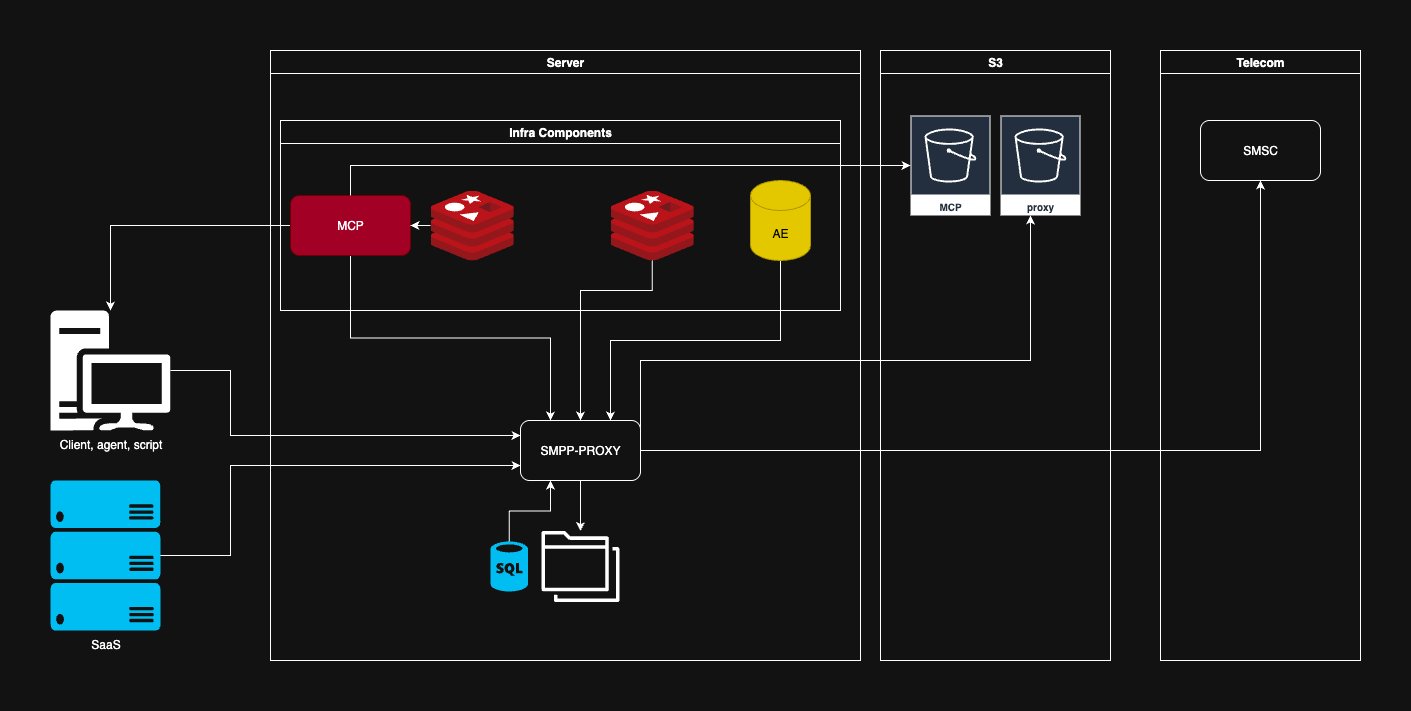

This section gives a high-level overview of how the platform is structured and how a message moves from a client application to the operator network. A detailed breakdown of each component's internals can be found in Concepts.

Platform Components

The platform is divided into four logical layers — client, application, storage, and telecom. The application layer runs in Docker and scales horizontally; the storage and telecom layers are external dependencies.

| Layer | Components | Role |
|---|---|---|
| Client | Scripts, agents, SaaS applications | Sources of SMS traffic |
| Application | SMS Gateway Proxy, MCP, Redis, Aerospike | Routing, policies, rate limiting, state |
| Storage | S3 / MinIO | Long-term CDR and log archive |
| Telecom | Operator SMSC | Final SMS delivery to the subscriber |

Key Services

SMS Gateway Proxy — data plane

The central component. Accepts HTTP and gRPC requests, maintains persistent SMPP v3.4 sessions to operators, segments long messages, tracks delivery receipts (DLR), and enforces rate limits.

The Proxy is deployed as a Docker container and can run as multiple replicas that share state via Redis.
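To make the segmentation step concrete, here is a minimal sketch of how the number of segments for a message could be estimated. It uses the standard SMS limits (160 characters for a single GSM 7-bit message and 153 per concatenated part; 70 and 67 UTF-16 code units for UCS-2). The function name `segment_count` is hypothetical, and the sketch deliberately ignores the GSM extension table, so it is not a description of the Proxy's actual encoder.

```python
import math

# GSM 03.38 basic character set (without the escape character).
# Simplification: characters from the extension table (such as '{' or the
# euro sign) are not handled here and push the message to UCS-2 instead.
GSM7_BASIC = set(
    "@£$¥èéùìòÇ\nØø\rÅåΔ_ΦΓΛΩΠΨΣΘΞÆæßÉ"
    " !\"#¤%&'()*+,-./0123456789:;<=>?"
    "¡ABCDEFGHIJKLMNOPQRSTUVWXYZÄÖÑÜ§"
    "¿abcdefghijklmnopqrstuvwxyzäöñüà"
)


def segment_count(text):
    """Return (encoding, number_of_segments) for a message body."""
    if all(ch in GSM7_BASIC for ch in text):
        encoding = "GSM7"
        units = len(text)            # one septet per basic character
        single, multi = 160, 153     # 153 leaves room for a 6-byte UDH
    else:
        encoding = "UCS2"
        units = len(text.encode("utf-16-be")) // 2  # UTF-16 code units
        single, multi = 70, 67
    if units <= single:
        return encoding, 1
    return encoding, math.ceil(units / multi)
```

For example, a 161-character Latin message no longer fits into one part and is counted as two segments, while any message containing a Cyrillic character is counted against the shorter UCS-2 limits.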

→ Learn more: Main Components, Proxy Architecture

Message Control Platform (MCP) — control plane

A contact policy engine built on Node-RED. Validates every message before passing it to the Proxy:

- per-number frequency limits (CP1) — daily and weekly thresholds

- deduplication within a time window (CP2)

- filtering against blacklists from CSV files in S3/MinIO

MCP is the "second opinion" before the SMSC: even if an application has requested a send, MCP can block it based on business rules.
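The three checks above can be illustrated with a small in-memory sketch. MCP itself is built on Node-RED, so this Python class is only an illustration of the logic, not its implementation. The class name `ContactPolicy`, the rolling 24-hour and 7-day windows, the order of the checks, and the reason strings are all assumptions.

```python
import hashlib
from collections import defaultdict, deque

DAY = 86_400       # seconds
WEEK = 7 * DAY


class ContactPolicy:
    """Hypothetical in-memory model of blacklist, CP2 and CP1 checks."""

    def __init__(self, daily_limit, weekly_limit, dedup_window, blacklist=()):
        self.daily_limit = daily_limit
        self.weekly_limit = weekly_limit
        self.dedup_window = dedup_window      # seconds
        self.blacklist = set(blacklist)
        self._sent = defaultdict(deque)       # number -> accepted timestamps
        self._seen = {}                       # (number, text hash) -> last accepted time

    def check(self, number, text, now):
        """Return (allowed, reason); records the send only when allowed."""
        if number in self.blacklist:
            return False, "blacklisted"

        # CP2: same text to the same number within the dedup window.
        key = (number, hashlib.sha256(text.encode("utf-8")).hexdigest())
        last = self._seen.get(key)
        if last is not None and now - last < self.dedup_window:
            return False, "CP2 duplicate"

        # CP1: drop entries older than a week, then count both windows.
        history = self._sent[number]
        while history and now - history[0] >= WEEK:
            history.popleft()
        if sum(1 for t in history if now - t < DAY) >= self.daily_limit:
            return False, "CP1 daily limit"
        if len(history) >= self.weekly_limit:
            return False, "CP1 weekly limit"

        history.append(now)
        self._seen[key] = now   # never evicted in this sketch
        return True, "ok"
```

A call such as `ContactPolicy(2, 3, 60).check("+200", "hi", now)` returns a decision together with the rule that produced it, which mirrors the idea that MCP can veto a send the application has already requested.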

→ Learn more: Message Control Platform

Redis — distributed rate limiting

Stores L2 rate-limiter tokens and MCP counters. Without Redis, only the local L1 limiter inside a single Proxy instance is active — sufficient for a single-node deployment, but not for a cluster.
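The relationship between the two limiter tiers can be sketched as follows. This is a simplified model, assuming both tiers behave like token buckets; the class names are hypothetical, and the shared bucket is an ordinary in-process object standing in for state that would live in Redis in a real cluster.

```python
class TokenBucket:
    """Token bucket with an explicit clock so behaviour is deterministic."""

    def __init__(self, rate, capacity, now=0.0):
        self.rate = rate                # tokens added per second
        self.capacity = capacity        # maximum burst size
        self.tokens = float(capacity)
        self.updated = now

    def try_acquire(self, now):
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class TwoTierLimiter:
    """L1 is local to one instance; L2 (optional) is shared by all of them."""

    def __init__(self, l1, l2=None):
        self.l1 = l1
        self.l2 = l2   # None models a deployment without Redis

    def allow(self, now):
        if not self.l1.try_acquire(now):
            return False
        # Simplification: the L1 token is not refunded when L2 rejects.
        if self.l2 is not None and not self.l2.try_acquire(now):
            return False
        return True
```

With two `TwoTierLimiter` instances that share one `l2` bucket, the combined throughput is capped by the shared bucket even though each instance still has local tokens left. With `l2=None`, each instance is limited only by its own L1 bucket, which is why that mode suits a single node but not a cluster.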

→ Learn more: Rate Limiting

Aerospike — message state

Persistent storage for the state of every message: transaction ID, status, timestamps, and delivery receipts. Aerospike was chosen for its high write throughput at low latency — critical because state is updated at every stage change throughout the pipeline.

S3 / MinIO — object storage

Not on the hot path of a message. Used for:

- exporting CDRs (Call Detail Records) to S3 on a regular schedule

- storing blacklist CSV files read by MCP

- archiving operational logs

Any service with an S3-compatible API works, for example AWS S3, MinIO, or Wasabi.

SMPP client connections

The Proxy maintains multiple persistent SMPP v3.4 sessions to the operator's SMSC. Sessions are grouped into virtual pools, across which traffic is distributed using the Smooth Weighted Round-Robin algorithm.

If a link goes down, its load is automatically redistributed to the remaining links in the pool. A recovered link is added back once it passes a health check.
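The following is a minimal sketch of Smooth Weighted Round-Robin over a pool with health flags, in the form popularised by nginx. The `Link` and `Pool` classes, the `mark_down` / `mark_up` methods, and the choice to reset a recovered link's counter are assumptions made for illustration; the Proxy's own pool logic may differ.

```python
class Link:
    def __init__(self, name, weight):
        self.name = name
        self.weight = weight
        self.current = 0      # running score used by the algorithm
        self.healthy = True


class Pool:
    def __init__(self, links):
        self.links = list(links)

    def pick(self):
        """Return the next healthy link, or None if every link is down."""
        live = [link for link in self.links if link.healthy]
        if not live:
            return None
        total = sum(link.weight for link in live)
        for link in live:
            link.current += link.weight
        best = max(live, key=lambda link: link.current)
        best.current -= total
        return best

    def mark_down(self, name):
        for link in self.links:
            if link.name == name:
                link.healthy = False

    def mark_up(self, name):
        # Assumption: a recovered link rejoins with a neutral score.
        for link in self.links:
            if link.name == name:
                link.healthy = True
                link.current = 0
```

With weights 5, 1, 1 for links `a`, `b`, `c`, seven consecutive picks yield `a a b a c a a`: the heavy link gets five of seven slots, but they are interleaved rather than sent as one burst. After `mark_down("a")`, traffic alternates between `b` and `c` until `a` is marked up again.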

→ Learn more: Load Balancing, Link Health Monitoring

Message Flow

Every SMS sent passes through several stages before reaching the subscriber:

- Authentication — The Proxy validates the JWT token and `X-App-ID`, and checks the IP against the SMPP link whitelist.

- L1 rate limiter — A local in-process limiter constrains instantaneous throughput.

- L2 rate limiter — A distributed Redis-backed limiter coordinates throughput across all Proxy instances.

- Contact policy — MCP checks frequency limits, deduplication, and blacklists.

- Routing — The message is assigned to a specific SMPP link or load-balanced within a pool.

- SMPP submit_sm — The Proxy sends the PDU to the SMSC. Long messages are segmented automatically (GSM7 or UCS2).

- Aerospike persistence — The current status and `message_id` are saved.

- submit_sm_resp / DLR — The Proxy receives the acceptance acknowledgment and later a delivery receipt from the SMSC, and updates state in Aerospike.

- CDR export — CDR batches are periodically flushed to S3 for downstream analysis.

If a block is triggered at any stage, the client receives the appropriate HTTP status code (401, 403, 429) or gRPC status, and the message is not sent to the SMSC.
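The short-circuit behaviour of the stages before submission can be sketched as a chain of checks. Everything here is illustrative: the `Request` fields, the stage functions, and in particular the mapping of stages to status codes (401 for a bad token, 403 for a disallowed IP or a policy block, 429 for rate limiting) are assumptions, since the text above lists the codes but not which stage returns which.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Request:
    token_valid: bool
    ip_allowed: bool
    within_rate: bool
    policy_ok: bool


@dataclass
class Rejection:
    stage: str
    http_status: int


def authentication(req: Request) -> Optional[Rejection]:
    if not req.token_valid:
        return Rejection("authentication", 401)
    if not req.ip_allowed:
        return Rejection("authentication", 403)
    return None


def rate_limit(req: Request) -> Optional[Rejection]:
    return None if req.within_rate else Rejection("rate_limit", 429)


def contact_policy(req: Request) -> Optional[Rejection]:
    return None if req.policy_ok else Rejection("contact_policy", 403)


PRE_SUBMIT_STAGES = [authentication, rate_limit, contact_policy]


def process(req: Request, submit: Callable[[Request], None]) -> Optional[Rejection]:
    """Run the checks in order; call submit only if none of them rejects."""
    for stage in PRE_SUBMIT_STAGES:
        rejection = stage(req)
        if rejection is not None:
            return rejection      # the SMSC is never contacted
    submit(req)
    return None
```

The point of the sketch is the ordering: the first stage that rejects determines the response, and `submit` is reached only when every earlier stage has passed.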

Deployment Model

A minimal single-node setup is suitable for testing and low-volume workloads:

- One host, four containers: Proxy, MCP, Redis, Aerospike

- Local volumes for config, logs, license, and user database

A production cluster looks like this:

- Multiple Proxy instances behind an L4 load balancer

- External Redis (Sentinel or Redis Cluster) — shared state for the L2 rate limiter

- External Aerospike cluster

- MCP as a separate pod with its own blacklists in S3

- All secrets (license, SMPP credentials, S3 credentials) via Kubernetes Secrets or Docker Secrets

→ Learn more: Infrastructure, Kubernetes Deployment

What's Next

- Quick Start — spin up a minimal environment in 5 minutes

- Platform Concepts — product overview of Message Center and the platform

- SMS Gateway Proxy Architecture — deep decomposition of components and their responsibilities

- Installation — production-ready deployment with volumes and backup